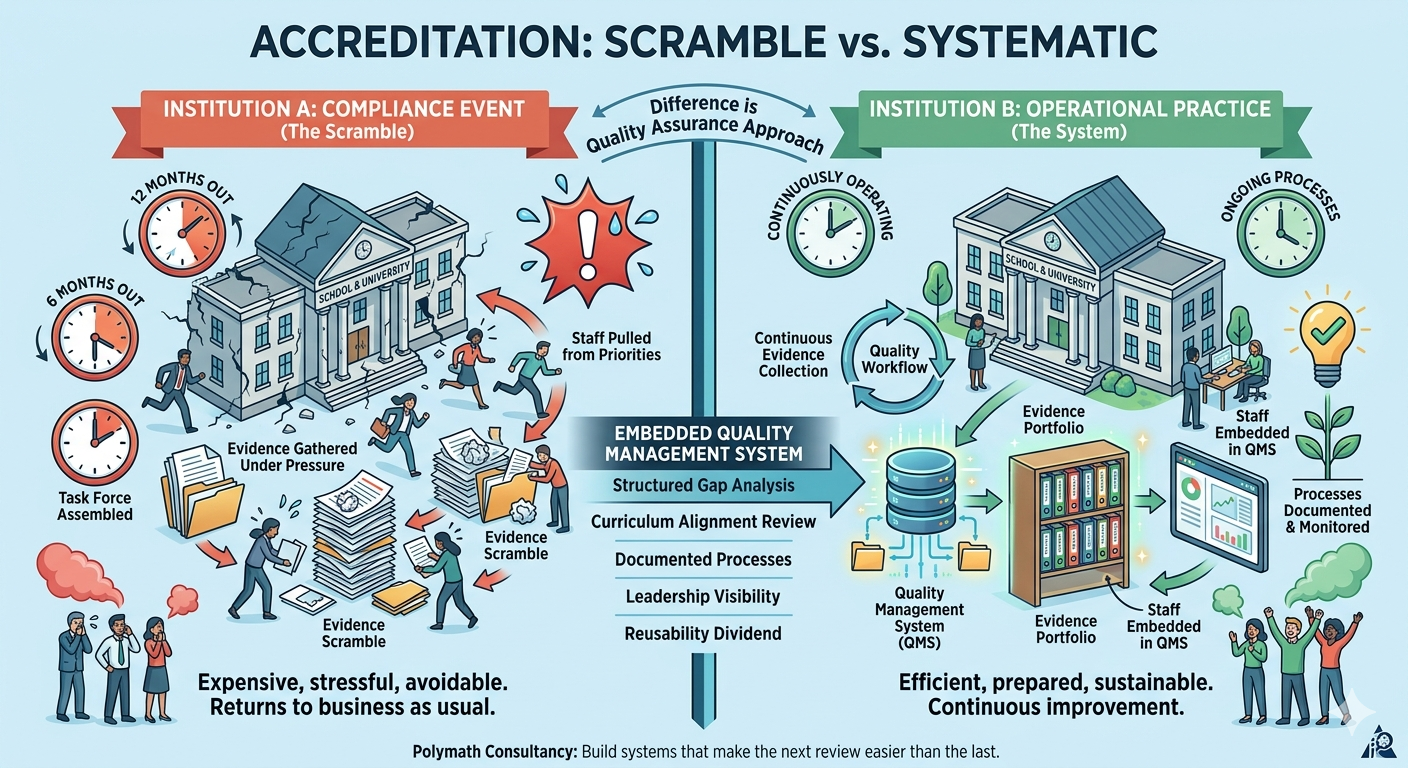

There is a pattern that plays out in schools and universities across the world with remarkable consistency. An accreditation review is scheduled. Twelve months out, or sometimes six, leadership realizes how much work is required. A task force is assembled. Staff are pulled from other priorities. Evidence is gathered under pressure. Gaps are closed at the last minute. The review passes, or requires a follow-up, or surfaces findings that are difficult to address quickly. Everyone exhales and then the institution returns, more or less, to business as usual.

Two years later, the cycle begins again.

This is an expensive way to manage institutional quality. It is also entirely avoidable.

What accreditation is actually measuring

The accreditation bodies that matter (IB, Cambridge, KHDA, NEASC, CIS, and their counterparts across different regions) are not primarily looking for evidence of perfection. They are looking for evidence of systematic quality management. They want to see that the institution has embedded processes for monitoring and improving its own performance, that curriculum is aligned to recognized learning outcomes frameworks, and that there is a functioning accountability structure for academic quality.

A school that scrambles to produce evidence before a visit is demonstrating the opposite of what an accreditor wants to see. An institution that produces evidence because it has been collecting evidence continuously, as part of how it operates, is demonstrating exactly what the accreditor is looking for.

The difference between those two institutions is not intelligence or ambition. It is whether quality assurance is treated as a compliance event or as an operational practice.

The gap analysis nobody wants to do early

The honest starting point for any accreditation preparation is a structured gap analysis mapped against the specific standards of the accrediting body. Not a general review. A mapped assessment of where the institution is currently in compliance, where it is in partial compliance, and where the gaps are significant enough to require sustained attention.

Most institutions resist doing this early because the findings could be uncomfortable. Gaps surface that leadership would prefer not to have documented. Evidence that should exist doesn’t. Processes that are described in policy documents aren’t happening in practice.

These are exactly the findings that need to surface eighteen months before a review, not three weeks before. The gap analysis, when done properly and early, converts an accreditation review from a high-stakes event into a managed process with a known action register and enough time to close what matters.

Curriculum alignment is not a one-time exercise

One of the most consistently neglected areas in institutional quality management is curriculum alignment. Most schools complete a curriculum review as part of their initial accreditation and then treat it as done. But standards evolve. Programmes are added. Teachers change. Assessment design drifts, and the alignment that existed at the last review may not exist at the next one.

A rigorous curriculum alignment review checks the current state of the programme against the relevant learning outcomes framework, assesses the coherence of sequencing and assessment design across year levels and subjects, and produces a gap closure plan with specific recommendations. The output is not just accreditation-ready documentation. It is a clearer, more coherent academic programme that benefits students regardless of what any external review concludes.

What a quality management system actually does

The institutions that handle accreditation most calmly are the ones that have built a functioning quality management system (QMS) into their operations. Not a binder of policies, but a live system with defined review cycles, documented evidence processes, clear staff roles in quality assurance, and leadership visibility of performance against standards.

A QMS like this changes the relationship an institution has with accreditation permanently. The review becomes a reporting occasion rather than a test. Evidence exists because it was always being collected. Staff are prepared because quality processes are part of their working environment. When the review is over, the institution doesn’t lose the momentum it built, because the system was never designed for the review. It was designed for the institution.

The reusability dividend

One aspect of well-structured accreditation preparation that is consistently undervalued is the reusability of what gets built. An evidence portfolio with a clear documentation architecture, a self-study report with strong structural foundations, and assessment instruments that have been validated don’t disappear after one review cycle. They become the baseline for the next one. Each cycle should require significantly less effort than the previous one, because the systems are already running.

That reusability is the long-term return on investing in quality management properly. Not just a successful review, but a permanently lower cost of compliance.

Polymath Consultancy guides schools and universities through the full accreditation journey, from initial gap analysis to embedded quality management systems that make the next review easier than the last. Speak with our team at polymathconsultancy.com/contact-us to start with a structured assessment.